Products & Services

Platform

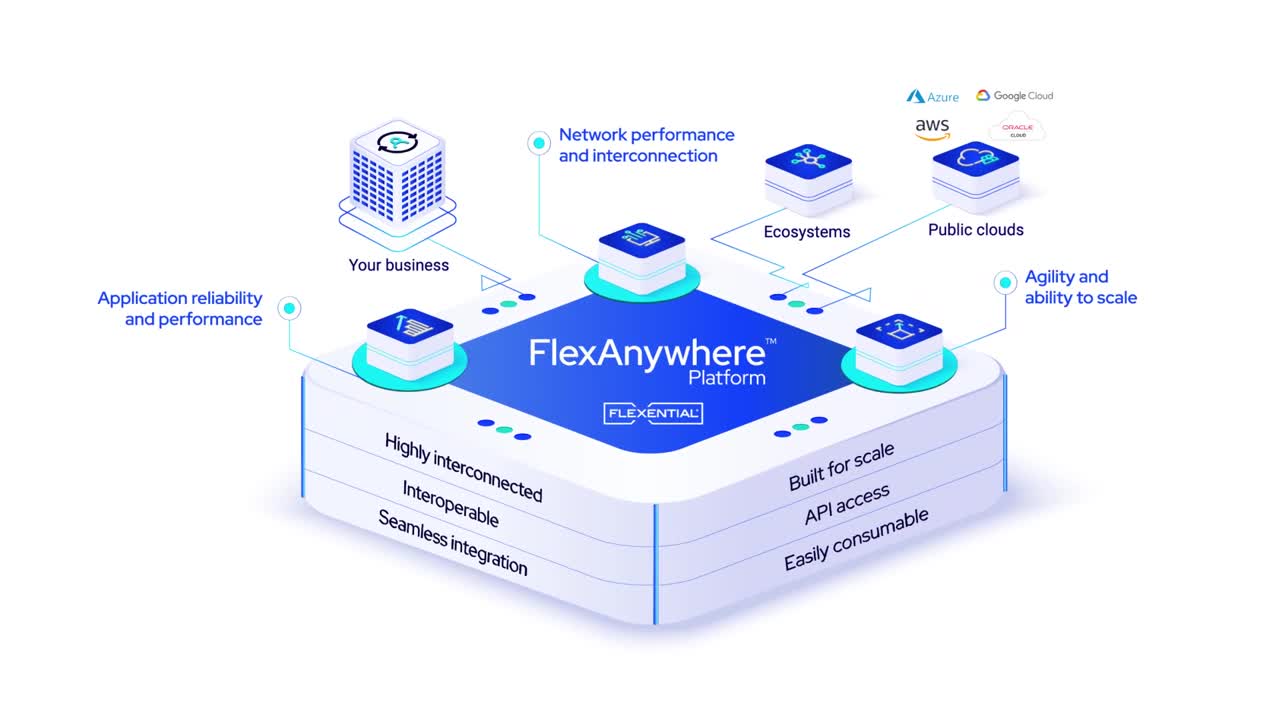

FlexAnywhere Platform

The FlexAnywhere™ platform is Flexential's integrated set of capabilities, including colocation, cloud, connectivity, data protection, and managed and professional services, that delivers tailored IT solutions to strengthen your business capabilities.

- Products & Services

- Colocation: Benefit from secure, highly efficient data center colocation services that offer high-density power capabilities and geographic proximity for the utmost control of distributed architectures.

- Interconnection: Access hundreds of carriers, hyperscale cloud providers and hybrid IT networks to meet your wired and wireless interconnection needs.

- Cloud: Solve complex hybrid IT challenges and support long-term business growth by implementing private, public and hybrid cloud solutions.

- Data Protection: Protect your data, applications and infrastructure from disruptions using disaster recovery solutions as unique as your business requirements.

- Professional Services: Accelerate your IT journey with consultative, end-to-end engagements that solve your most complex transformation, security and compliance challenges.

Streamline your cloud infrastructure and see how to optimize TCO in a no-cost, no-obligation workshop

Solutions

- FLEXANYWHERE SOLUTIONS

- Improve Network Performance and Interconnection: Move data reliably, quickly, and securely

Forrester shares the top 10 trends in edge computing and IoT

Data Centers

Data Center Locations

Explore our robust portfolio of data center facilities across 19 national markets

Book a Data Center Tour

Take a tour of one of our 41 highly connected data centers

- Northeast

- Allentown, PA

- Philadelphia, PA

- Richmond, VA

Explore emerging trends, strategies, and challenges of data centers in the AI era

Resources

Company

Latest News

- Register leads

- Download sales and marketing resources

- Access online training

- View on-demand webinars

Flexential Xperience Portal (FXP) provides a seamless and unparalleled digital customer experience to monitor and manage your hybrid IT environment in real time. You can:

- Visualize and manage the performance of your entire environment and all your Flexential services in real time

- Purchase additional services on-demand

- Request customer support 24/7/365